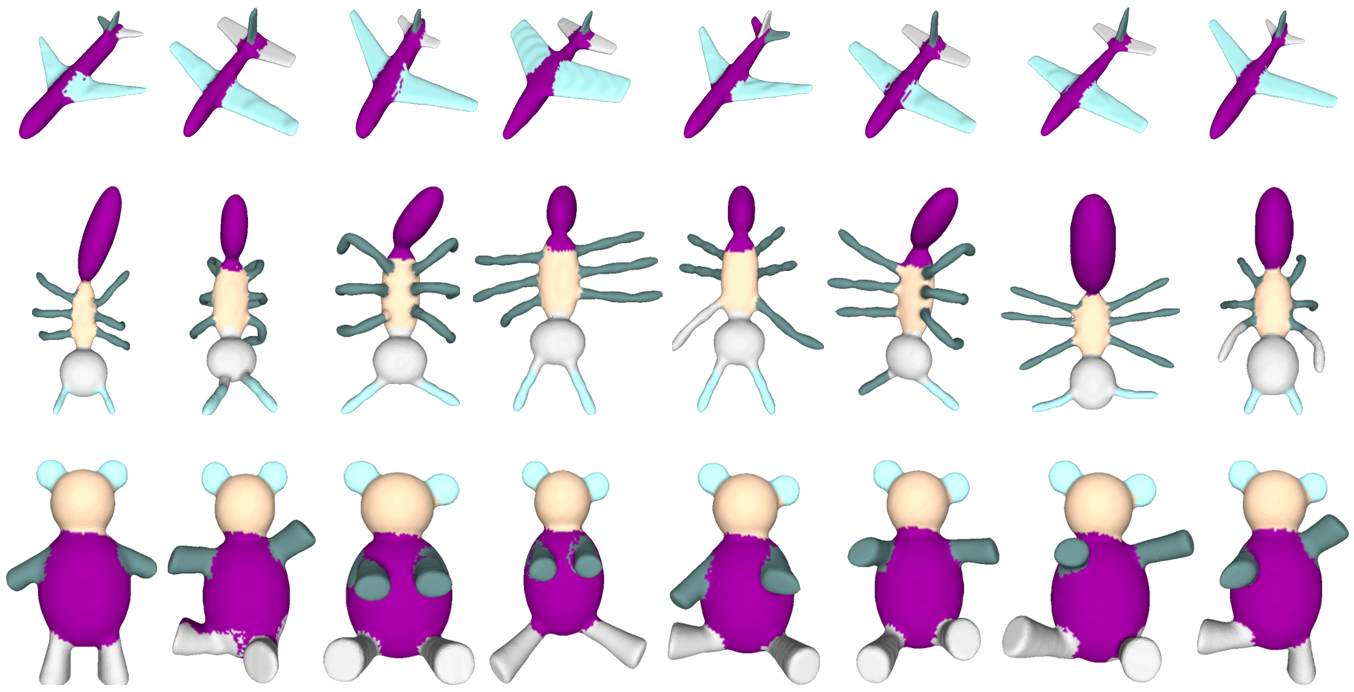

3D shape segmentation is a fundamental computer vision task that partitions the object into labeled semantic

parts. Recent approaches to 3D shape segmentation learning heavily rely on high-quality labeled training datasets.

This limits their use in applications to handle the large scale

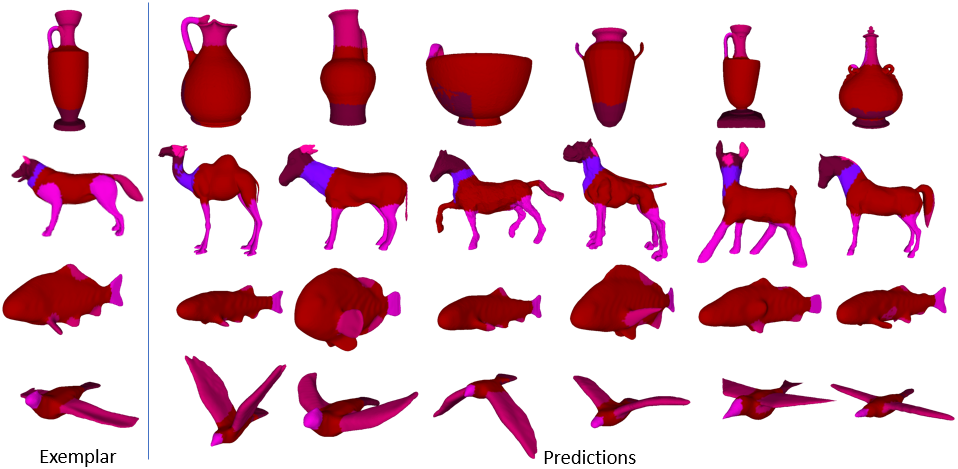

unannotated datasets. In this paper, we proposed a novel

semi-supervised approach, named Robust Learning of OneShot 3D Shape Segmentation (ROSS), which only requires

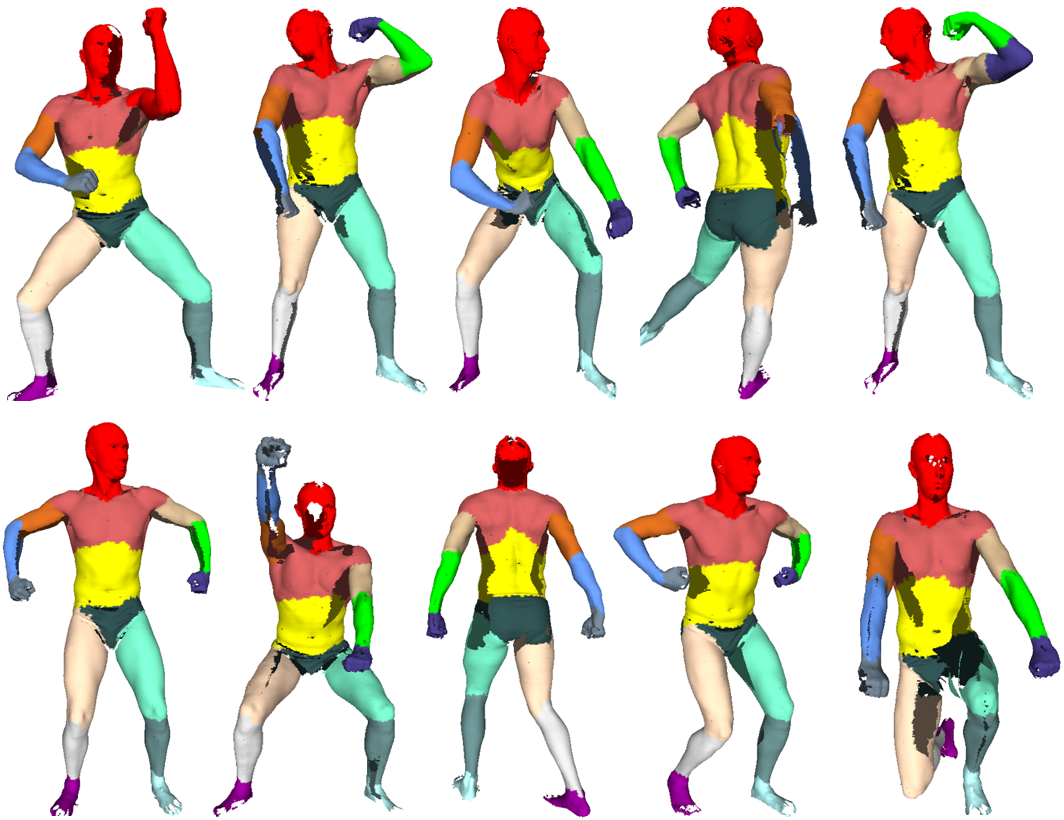

one single exemplar labeled shape for training. The proposed ROSS can generalize its ability from a one-shot training process to

predict the segmentation for previously unseen 3D shape models. The proposed ROSS is composed of

three major modules for 3D shape segmentation as follows.

The global shape descriptor generator is the first module

that utilizes the proposed reference weighted convolution

to learn a 3D shape descriptor. The second module is a

part-aware shape descriptor constructor that can generate

weighted descriptors from a learned 3D shape descriptor

according to semantic parts without supervision. The shape

morphing with label transferring works as the last module.

It morphs the exemplar shape and then transfers labels from

the transformed exemplar shape to the target shape. The

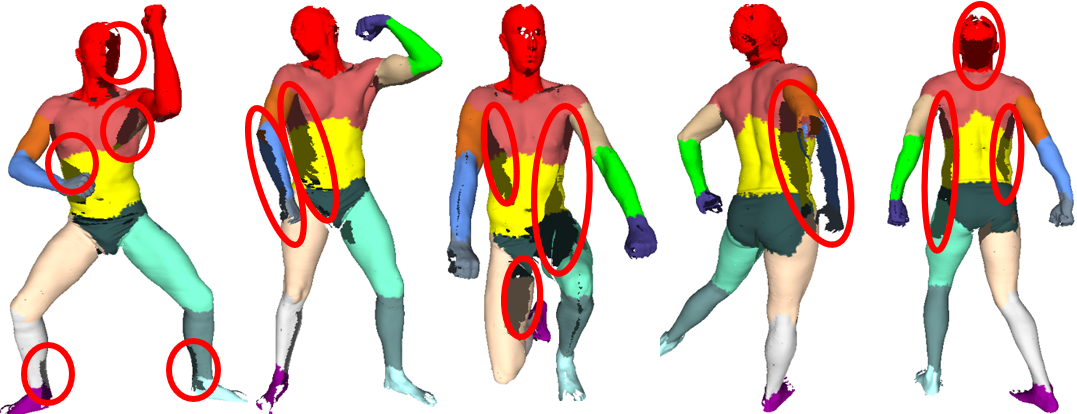

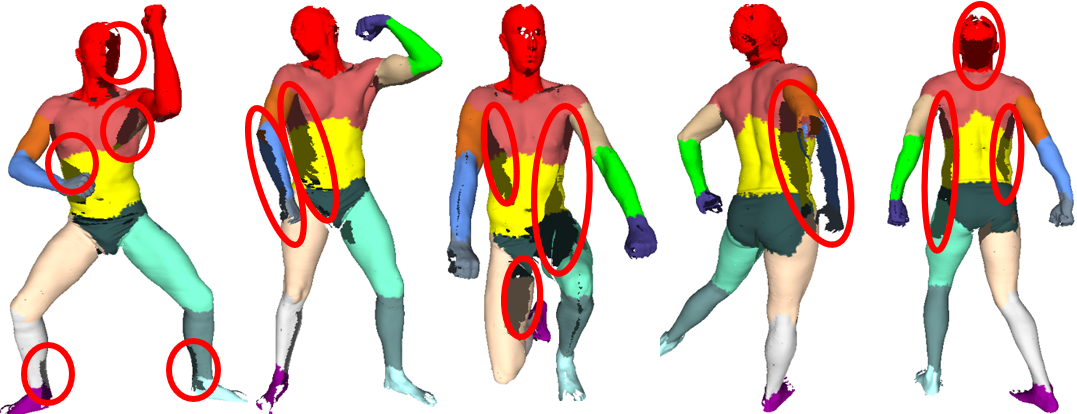

extensive experimental results on 3D mesh datasets demonstrate the ROSS is robust to noise and incomplete shapes

and it can be applied to unannotated datasets. The experiment shows the proposed ROSS can achieve comparable

performance with the supervised method.
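To make the label-transferring step concrete, the following is a minimal sketch, not the ROSS implementation. It assumes that the exemplar shape has already been morphed toward the target and that labels are copied by a nearest-neighbor lookup; the abstract does not state the transfer rule, so the nearest-neighbor choice, the function name `transfer_labels`, and the toy point sets are all illustrative assumptions.

```python
from math import dist  # Euclidean distance, available in Python 3.8+


def transfer_labels(morphed_exemplar, exemplar_labels, target):
    """Assign each target point the label of its nearest morphed-exemplar point.

    Hypothetical helper: nearest-neighbor transfer is an assumption made for
    illustration, not a rule stated in the abstract.
    """
    if len(morphed_exemplar) != len(exemplar_labels):
        raise ValueError("each exemplar point needs exactly one label")
    if not morphed_exemplar:
        raise ValueError("the exemplar must contain at least one point")

    transferred = []
    for point in target:
        # Index of the morphed exemplar point closest to this target point.
        nearest = min(
            range(len(morphed_exemplar)),
            key=lambda i: dist(point, morphed_exemplar[i]),
        )
        transferred.append(exemplar_labels[nearest])
    return transferred


# Toy data: a two-point "exemplar" that is assumed to be morphed already.
exemplar_points = [(0.0, 0.0, 0.0), (0.0, 0.0, 1.0)]
exemplar_part_labels = ["leg", "seat"]
target_points = [(0.1, 0.0, 0.1), (0.0, 0.1, 0.9), (0.0, 0.0, 0.4)]

print(transfer_labels(exemplar_points, exemplar_part_labels, target_points))
# prints ['leg', 'seat', 'leg']
```

This brute-force lookup costs time proportional to the product of the two point counts; a spatial index such as a k-d tree would be the usual substitute for dense shapes.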
